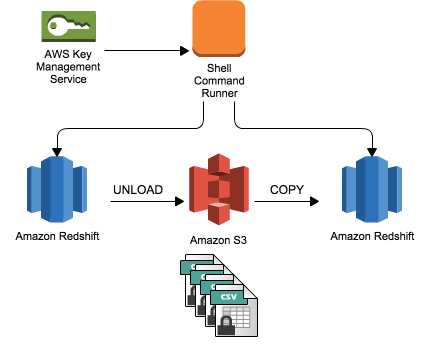

Amazon Redshift makes it easy for you to launch a data warehouse. Because Redshift is a managed service, you can focus on your data and your analytics, while Redshift takes care of the infrastructure for you. We have added support for twelve powerful and important features over the past month or so.

You can now load data in JSON format directly into Redshift, without preprocessing. Many devices, event handling systems, servers, and games generate data in this format. When you use this new option, you can specify the mapping of JSON elements to Redshift column names in a jsonpaths file. This gives you the power to map the hierarchical data in the JSON file to the flat array of columns used by Redshift. Here is a COPY command that references the jsonpaths file and the JSON data, both of which are stored in Amazon S3: `COPY venue FROM 's3://mybucket/venue.json' credentials 'aws_access_key_id=ACCESS-KEY-ID;aws_secret_access_key=SECRET-ACCESS-KEY' JSON AS 's3://mybucket/venue_jsonpaths.json'`

You can now copy data from an Elastic MapReduce cluster to a Redshift cluster. To do this, you first need to transfer your Redshift cluster's public key and the IP addresses of the cluster nodes to the EC2 hosts in the Elastic MapReduce cluster. Then you can use a Redshift COPY command to copy fixed-width files, character-delimited files, CSV files, and JSON-formatted files to Redshift. Documentation: Loading Data From Amazon EMR

You can now use UNLOAD to unload the result of a query to one or more Amazon S3 files. The option can be either PARALLEL ON or PARALLEL OFF. By default, UNLOAD writes data in parallel to multiple files, according to the number of slices in the cluster. If PARALLEL is OFF or FALSE, UNLOAD writes to one or more data files serially, limiting the size of each S3 object to 6.2 gigabytes. If, for example, you unload 13.4 GB of data, UNLOAD automatically creates three files.

You can now configure a maximum of 50 simultaneous queries across all of your queues. Each slot in a queue is allocated an equal, fixed share of the server memory allocated to the queue. Increasing the level of concurrency will allow you to increase query performance for some types of workloads. For example, workloads that contain a mix of many small, quick queries and a few long-running queries can be served by a pair of queues, using one with a high level of concurrency for the small, quick queries and another with a different level of concurrency for the long-running queries.

You can now configure the cursor counts and result set sizes. Larger values will result in increased memory consumption, so be sure to read the documentation on Cursor Constraints before making any changes. We know that this change will be of special interest to Redshift users who are also making use of data visualization and analytical products from Tableau.

The new REGEXP_SUBSTR function extracts a substring from a string, as specified by a regular expression. For example, a SELECT statement can retrieve the portion of an email address between the @ character and the top-level domain name. The new REGEXP_COUNT function returns an integer that indicates the number of times a regular expression pattern occurs in the string. For example, the following SELECT statement counts the number of times a three-letter sequence occurs: `select regexp_count('abcdefghijklmnopqrstuvwxyz', '[a-z]{3}')`

Amazon Redshift (/redshift/) adds support for unloading SQL query results to Amazon S3 in JSON format, a lightweight and widely used data format that supports schema definition. With the UNLOAD command in Amazon Redshift, you can now use JSON in addition to the already supported delimited text, CSV, and Apache Parquet formats. Using JSON format with your UNLOAD statement, you can write your query results to JSON files with each line containing a JSON object, representing a full record in the query result. Amazon Redshift supports writing nested JSON data when your query result contains columns using SUPER, the native Amazon Redshift data type to store semi-structured data or documents as values. Support for exporting JSON data using UNLOAD is available in all AWS commercial Regions. Refer to the regional product services page (/about-aws/global-infrastructure/regional-product-services/) for Amazon Redshift availability. For more information or to get started with Amazon Redshift, see the Amazon Redshift documentation.
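
To picture the JSON output format described above, where each line holds one JSON object representing a full record, here is a minimal local sketch in Python. It does not call Redshift and is not how UNLOAD is implemented; the `to_json_lines` helper, the `rows` sample data, and the column names are all hypothetical, and the nested `details` value merely stands in for a SUPER column.

```python
import json


def to_json_lines(records):
    """Serialize each record as one JSON object per line (hypothetical helper)."""
    return "\n".join(json.dumps(record) for record in records)


# Hypothetical query result: two records, each with a nested value
# standing in for a column of the SUPER type.
rows = [
    {"venueid": 1, "venuename": "Example Hall",
     "details": {"seats": 500, "tags": ["indoor"]}},
    {"venueid": 2, "venuename": "Sample Arena",
     "details": {"seats": 12000, "tags": ["outdoor", "sports"]}},
]

output = to_json_lines(rows)
print(output)
```

A consumer can read such a file one line at a time and parse each line with `json.loads`, which is why the one-object-per-line layout suits large exports: no reader has to hold the whole file in memory.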
